Multimodal AI

Built a library of multimodal generation patterns now used across Nano Banana, Gemini, Genie, Whisk, and Pomelli.

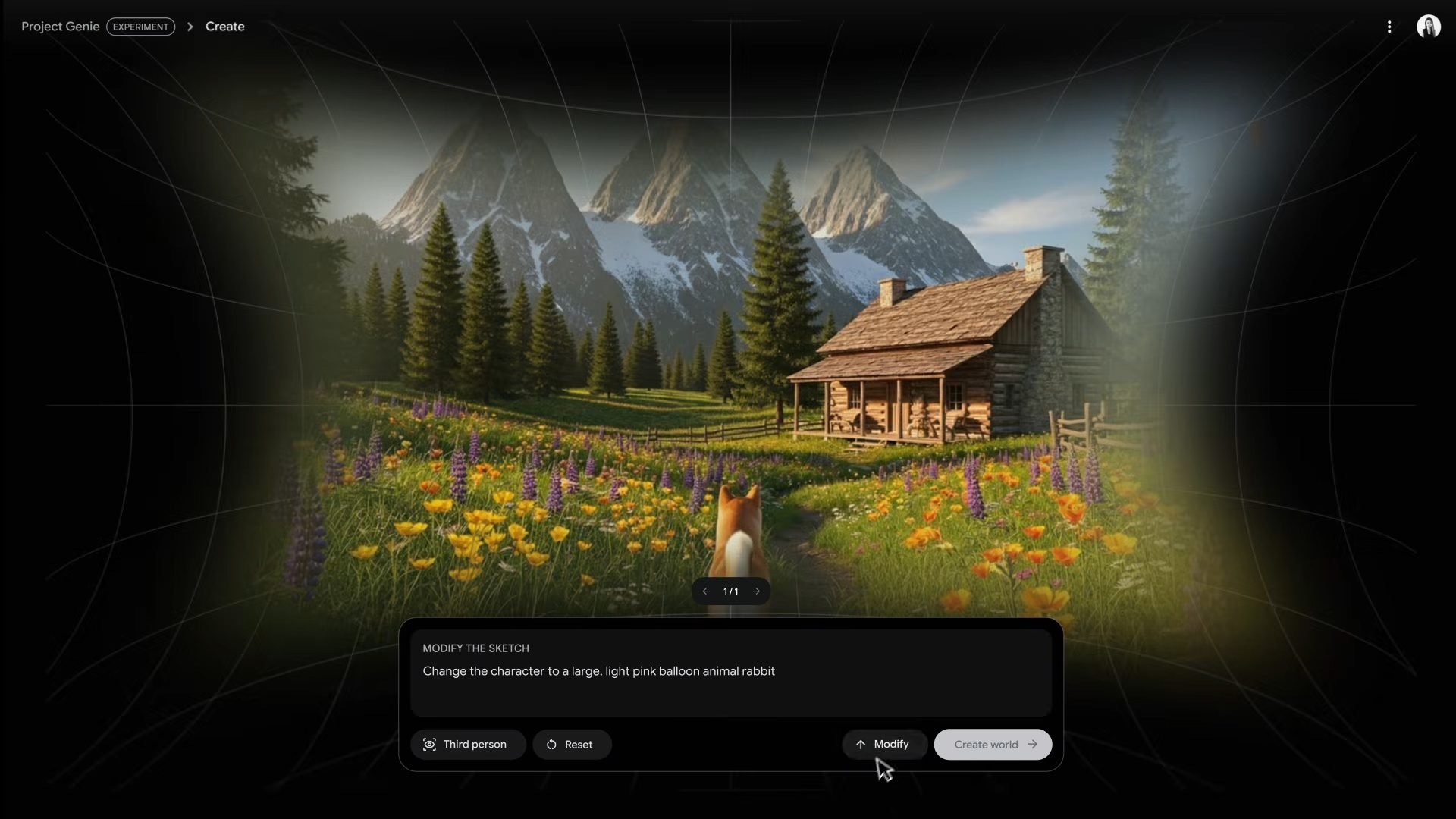

Design preview

New patterns for image generation

Most AI users struggle to write prompts. What if you could prompt with more than words?

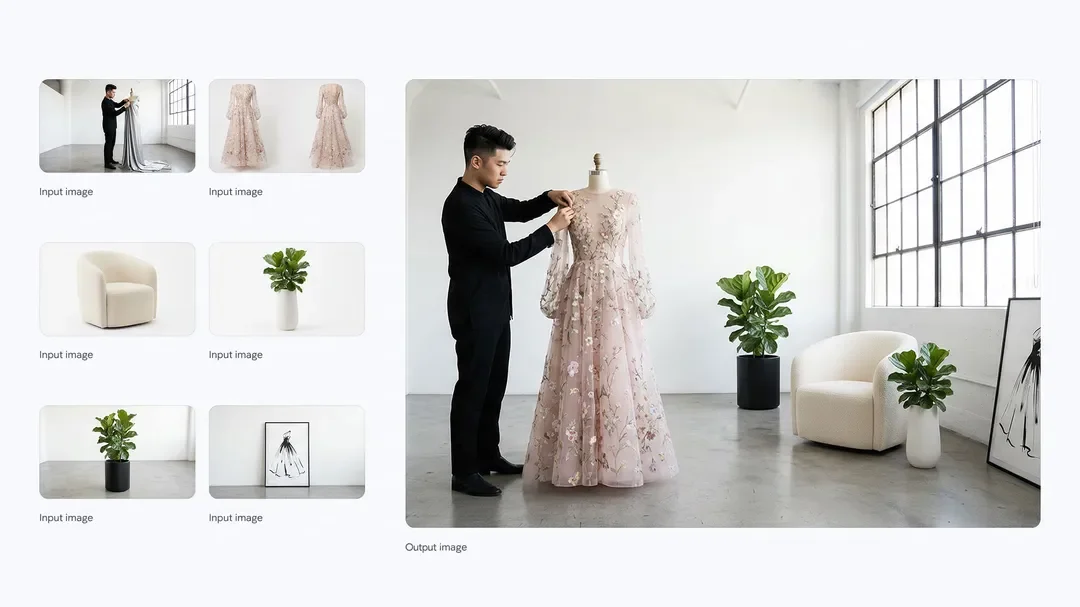

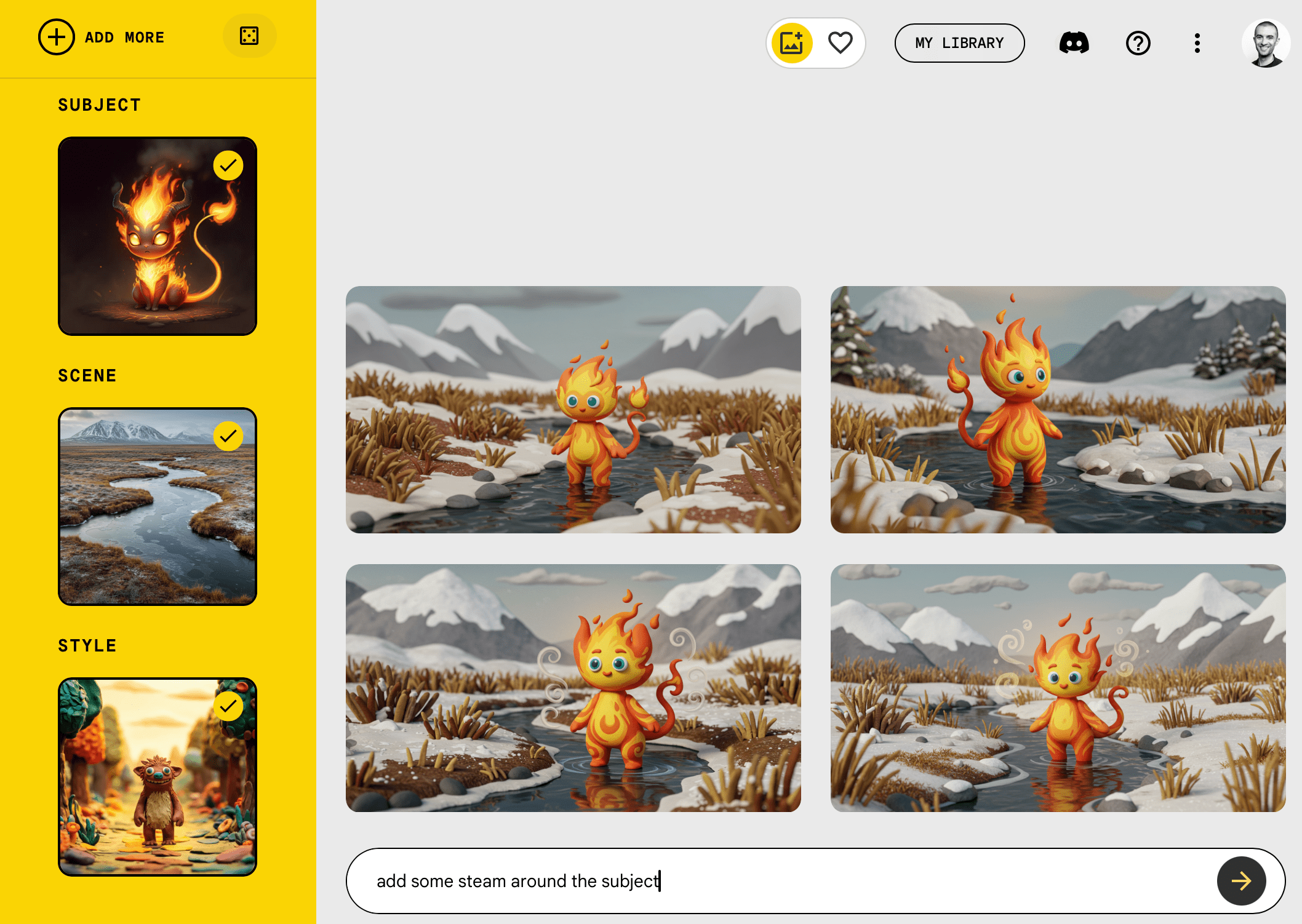

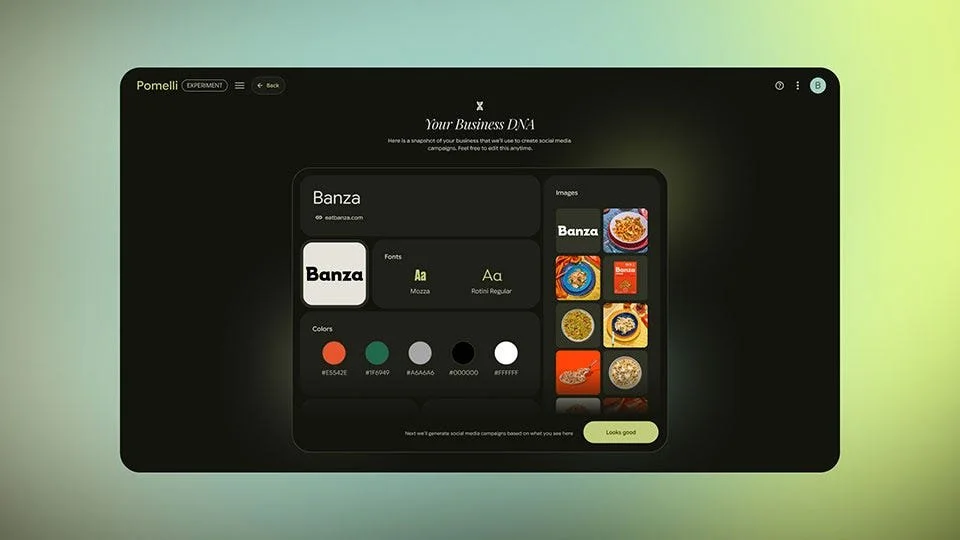

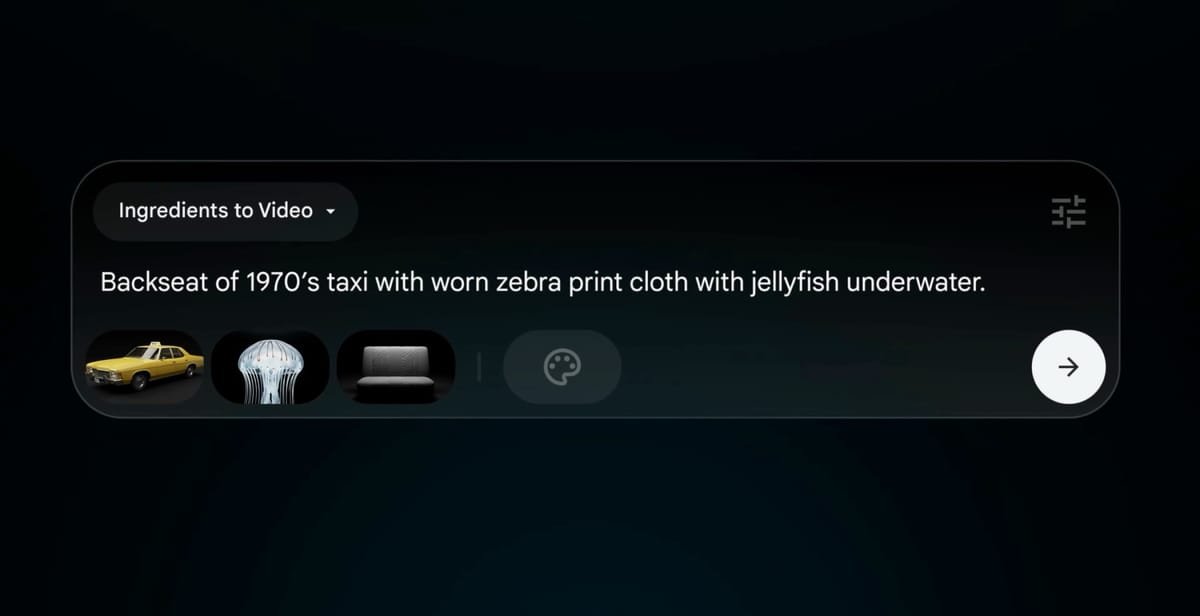

Over six months, I helped Google identify and refine a library of new interaction patterns. Through iterative global research, I crafted new prompting and editing affordances that let users prompt with specificity and correct inherent biases.

We built new flows for professionals, who need precise controls for consistent characters and brand assets.

My role

I led the client relationship and was the primary IC across all activities, from workshops and global research to design, synthesis, and socialization.

My work brought together teams across Google, including DeepMind, Labs, Gemini, Research, YouTube, Search, and Ads.

Team + timeline

Design lead (me)

Jr. Researcher

Strategy advisor

10 months

Activities

Research

Expert interviews

Landscape audit

Opportunity definition

Global recruiting

Generative IDIs

Insights & synthesis

Strategy

Ideation workshop

Strategic frameworks

Product Design

Concept generation

Stimuli development

Flow design & prototyping

Product testing

Design toolkit

Pattern & token development

Development

Prompt engineering

Model architecture design

Confidential work.

Details are available on request.